Introduction: What is Explainable AI?

Artificial Intelligence (AI) has rapidly become a part of our lives today. When you see a recommendation on your mobile phone, apply for a loan online, or hear an AI-based diagnosis at the hospital, AI is at work somewhere. AI systems analyze large amounts of data and make decisions, making many tasks fast and efficient.

But there’s a significant problem here. Often, AI makes a decision, but it’s unclear why. For example, if a bank’s AI system rejects your loan application, you often won’t be told the reason for the rejection. This situation is called the “black box problem” in the AI world.

A black box means that the processing of the system is not transparent to the user. The AI model takes in input data and produces output, but it’s difficult to understand how the decision was made.

To address this challenge, the concept of Explainable AI (XAI) emerged. Its main objective is to make the decisions of AI systems understandable to humans. This means that if the AI makes a prediction or recommendation, it should also explain the factors that led to that decision.

Let’s understand this with a simple example.

Suppose an AI system in a bank is deciding on loan approval. If the loan is rejected, Explainable AI (XAI) can explain the reasons for the rejection—such as a low credit score, unstable income, or previous loan history.

Similarly, in the healthcare sector, if an AI diagnoses a disease, Explainable AI (XAI) can help the doctor understand which medical data points the AI considered to arrive at that result.

Many companies also use AI tools in the hiring process. In such cases, Explainable AI (XAI) can clarify which factors were responsible for shortlisting or rejecting a candidate.

In simple terms, the aim of Explainable AI (XAI) is not just to make AI powerful, but also to make it transparent, trustworthy, and human-friendly. Only when people can understand AI’s decisions will they be able to truly trust that technology.

This is why Explainable AI (XAI) is being considered a crucial part of future-ready and responsible AI systems in today’s AI industry.

AI’s Black Box Problem

AI technology is very powerful, but it also faces a significant challenge known as the “Black Box Problem.” When we talk about modern AI systems, they often use complex models like deep learning and neural networks. These models can analyze large data sets and make accurate predictions, but their decision-making process is difficult for humans to understand.

Simply put, an AI system takes input data, processes it through its algorithm, and then produces an output or prediction. However, the calculations that went into reaching that prediction and which factors were most important—this information is often unclear. This is why many experts call AI models “black boxes.”

A black box means that we can see the system’s input and output, but how the decisions were made in between is not completely transparent. Explainable AI (XAI) becomes crucial to understanding and solving this problem. The primary objective of Explainable AI (XAI) is to make AI decisions understandable to humans.

Let’s understand this with a simple example. Suppose a doctor in a hospital is diagnosing a patient with the help of an AI system. The AI system can analyze the patient’s medical data, test reports, and symptoms to predict which disease the patient may be suffering from. However, if the AI simply provides the result without explaining why it reached that conclusion, it may be difficult for the doctor to fully trust that decision.

This is where the need for Explainable AI (XAI) comes into play. If the AI system also explains which symptoms or reports it prioritized when making the diagnosis, it becomes easier for the doctor to verify the decision.

Similarly, the use of AI is increasing in many industries, such as banking, healthcare, hiring, and insurance. But until AI systems are transparent, it will be difficult to build user trust. Therefore, AI experts are now working on models that not only provide predictions but also explain the logic behind their decisions.

This is why Explainable AI (XAI) is becoming an increasingly important part of AI development today. This technology is a big step towards making AI systems more transparent, reliable, and human-friendly.

Why is Explainable AI (XAI) important?

Artificial Intelligence is being adopted rapidly across industries today. Banking, healthcare, online platforms, and many other businesses are using AI to make decisions. But when AI makes an important decision, a question inevitably arises in people’s minds: why did the AI make this decision?

This is why Explainable AI (XAI) has become so important today. Its primary objective is to make the decisions of AI systems understandable to humans, so that people can trust AI technology and use it responsibly.

Below are some important reasons why Explainable AI (XAI) is essential.

1️⃣ Trust Building

The most important factor in adopting any technology is trust. If AI makes a decision but the reasoning is unclear, users may not be able to fully trust the system.

For example, if a bank’s AI system rejects a person’s loan application, the user will want to know the reason for the rejection. If the AI system can explain that the loan was rejected because of income, credit score, or financial history, the user can understand the decision.

This is where Explainable AI (XAI) helps build trust.

2️⃣ Transparency

It is crucial for AI systems to have transparent decision-making processes. Transparency means it should be clear how the AI makes decisions and which factors it uses.

When AI’s decision-making process is clear, developers, businesses, and users can all understand whether the system is working correctly. Explainable AI (XAI) plays a key role in increasing this transparency.

3️⃣ Legal Compliance

Today, new regulations and rules are being developed in many countries regarding AI systems. A major objective of these regulations is to ensure that AI systems operate in a fair and transparent manner.

Explaining decisions is crucial when using AI in sensitive sectors such as finance, healthcare, and insurance. For example, if an AI system rejects an insurance claim, the company may need to explain the reason for the rejection.

In such situations, Explainable AI (XAI) helps companies meet legal requirements.

4️⃣ Bias Detection

AI models can sometimes make biased decisions. Bias means the system may exhibit unfair behavior toward certain people or data.

For example, if a hiring AI system is trained on biased data, it may reject some candidates unfairly. If the AI doesn’t explain its decision, this bias would be very difficult to detect.

But Explainable AI (XAI) can help us understand which factors the AI considered most heavily when making decisions. This helps developers identify and correct biases in the system.

A Brief Conclusion

Simply put, Explainable AI (XAI) makes AI systems more transparent, trustworthy, and responsible. When AI clearly explains its decisions, it becomes easier for users, businesses, and regulators alike to trust the technology.

How does Explainable AI work?

Now the question arises: how does Explainable AI (XAI) actually work? If AI models are so complex, how are their decisions explained?

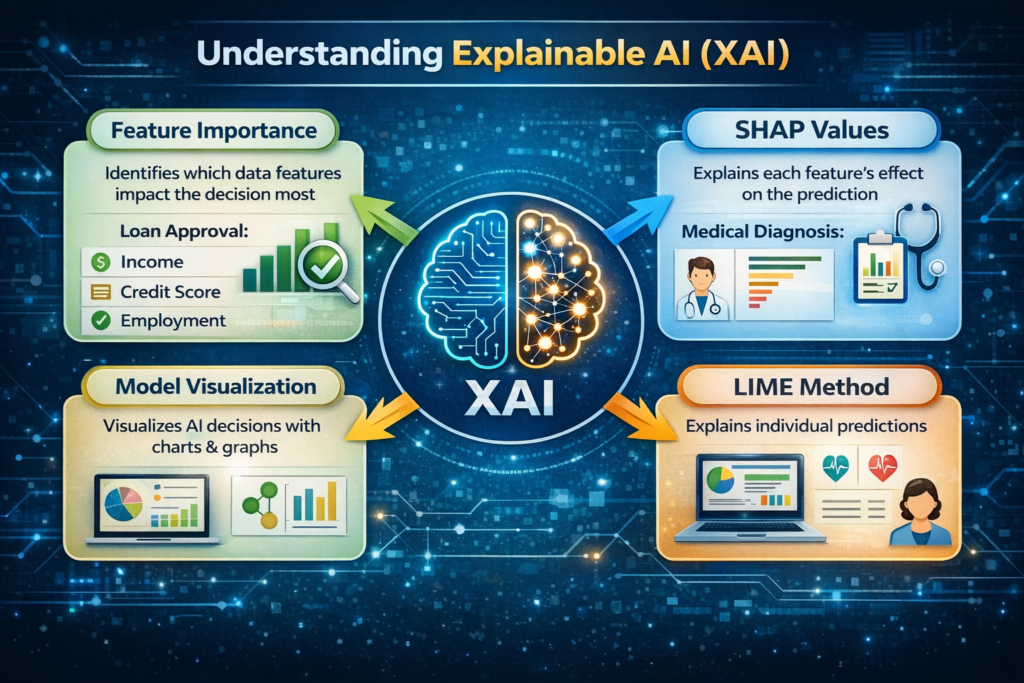

In fact, Explainable AI (XAI) uses various techniques to determine which factors led an AI system to make a prediction or decision. The purpose of these techniques is to make AI’s decision-making process more clear and understandable for humans.

One of the most important techniques is Feature Importance.

1️⃣ Feature Importance

“Feature importance” means understanding which data factors are most influencing an AI model’s decision.

Whenever an AI model makes a prediction, it analyzes multiple data points or features. But not every feature is equally important. Some features influence the decision more, while others have less influence.

This is where Explainable AI (XAI) attempts to show which features are playing the most important role in AI decisions.

Let’s understand this with a simple example.

Suppose an AI system in a bank is making a loan approval decision.

When a person applies for a loan, the AI analyzes several factors, such as:

The person’s income

Their credit score

Their employment status

Previous financial history

Now, if the AI system approves or rejects a person’s loan, Explainable AI (XAI) can show which factors were most important in the decision.

For example, the AI might indicate that a low credit score was the main reason for loan rejection, while income and employment status had a lesser impact.

In this way, Explainable AI (XAI) helps both users and businesses understand the logic behind the AI system’s decision. This makes the decision process more transparent and increases people’s trust in AI technology.

Simply put, feature importance is a key Explainable AI (XAI) technique that helps make complex AI decisions simpler to understand.
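To make the idea concrete, here is a minimal sketch of one common way to estimate feature importance: shuffle one feature at a time and see how often the model changes its answer. Everything in it is an assumption made for illustration: the `approve` function, its weights, its threshold, and the randomly generated applicants do not come from any real bank. It is also a simplified variant that counts flipped predictions, rather than the more usual drop in accuracy against known labels.

```python
import random

# Hypothetical toy model: approves a loan when a weighted score passes a threshold.
# The weights and the threshold are illustrative assumptions only.
def approve(applicant):
    score = (
        0.7 * applicant["credit_score"] / 850
        + 0.2 * applicant["income"] / 100_000
        + 0.1 * applicant["employed"]
    )
    return score >= 0.6

def make_applicants(n, rng):
    # Synthetic applicants, generated only so the sketch has data to work on.
    return [
        {
            "credit_score": rng.randint(300, 850),
            "income": rng.randint(10_000, 100_000),
            "employed": rng.randint(0, 1),
        }
        for _ in range(n)
    ]

def permutation_importance(model, rows, features, rng, repeats=5):
    """Share of predictions that change when one feature is shuffled across rows."""
    baseline = [model(row) for row in rows]
    importance = {}
    for feature in features:
        changed = 0
        for _ in range(repeats):
            shuffled = [row[feature] for row in rows]
            rng.shuffle(shuffled)
            for row, value, base in zip(rows, shuffled, baseline):
                # Re-run the model with only this one feature replaced.
                if model({**row, feature: value}) != base:
                    changed += 1
        importance[feature] = changed / (repeats * len(rows))
    return importance

rng = random.Random(0)  # fixed seed so the run is repeatable
rows = make_applicants(500, rng)
importance = permutation_importance(
    approve, rows, ["credit_score", "income", "employed"], rng
)
for name, value in sorted(importance.items(), key=lambda item: -item[1]):
    print(f"{name}: {value:.3f}")
```

A feature whose shuffling flips many decisions is one the model relies on heavily. In this toy setup the credit score carries the largest weight, so it should come out on top.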

Real-World Use Cases of Explainable AI

Today, Artificial Intelligence isn’t limited to research or technology labs. It’s now being used in many real-world sectors, such as healthcare, banking, transportation, and security. But when AI is involved in a critical decision, it’s crucial for people to understand why that decision was made. This is why Explainable AI (XAI) is rapidly gaining importance.

The purpose of Explainable AI (XAI) isn’t just to provide a prediction, but also to explain the reasons behind the AI’s results. This makes the technology more transparent and trustworthy. Let’s explore some real-world examples to understand how Explainable AI (XAI) works in different industries.

Healthcare

The use of AI in the healthcare sector is rapidly increasing. Many hospitals and research centers are using AI systems for disease detection, medical imaging analysis, and diagnosis.

But healthcare is a very sensitive field, so it’s crucial for doctors to understand how AI has diagnosed a disease.

This is where Explainable AI (XAI) helps. It can show doctors which factors in a patient’s medical data, symptoms, or test reports were most important in the AI decision. This allows doctors to better understand the AI results and make informed medical decisions.

Banking

In the banking industry, AI is used in tasks such as loan approval, credit scoring, and risk analysis. When a person applies for a loan, the AI system analyzes their financial information to decide whether the loan should be approved.

But if the loan is rejected and the reason is unclear, it can cause confusion for the user.

In such a situation, Explainable AI (XAI) can explain the reason behind the loan rejection—such as a low credit score, unstable income, or high financial risk. This makes the banking process more transparent and increases customer trust.
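As a rough sketch of how such rejection reasons could be produced, the example below uses a simple linear scoring model and reports how much each factor pushed the score up or down compared with a typical applicant. The feature names, `WEIGHTS`, `BASELINE`, and `THRESHOLD` are all hypothetical values chosen for illustration, not a real lending policy.

```python
# Hypothetical linear scoring model. All numbers below are illustrative assumptions.
WEIGHTS = {"credit_score": 0.004, "monthly_income": 0.0002, "late_payments": -0.3}
BASELINE = {"credit_score": 700, "monthly_income": 4000, "late_payments": 0}
THRESHOLD = 0.0

def explain_decision(applicant):
    # Contribution of each feature = weight * (distance from a typical applicant).
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name])
        for name in WEIGHTS
    }
    score = sum(contributions.values())
    approved = score >= THRESHOLD
    # Reasons are the features that lowered the score, most harmful first.
    reasons = sorted(
        (name for name, value in contributions.items() if value < 0),
        key=lambda name: contributions[name],
    )
    return approved, score, contributions, reasons

approved, score, contributions, reasons = explain_decision(
    {"credit_score": 580, "monthly_income": 4500, "late_payments": 1}
)
print("approved:", approved)
print("main reasons:", reasons)
```

For this applicant the low credit score lowers the score the most, followed by the late payment, while the slightly above-baseline income helps a little. A real system would need an explanation method suited to its actual model.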

Autonomous Vehicles

AI technology is also used in self-driving cars, or autonomous vehicles. These vehicles use sensors, cameras, and AI algorithms to understand road conditions and make driving decisions.

But for safety, it’s crucial to understand why the AI took a particular action in a situation—such as suddenly braking or changing direction.

Explainable AI (XAI) helps developers and engineers understand why an AI system made a particular decision in a given situation. This can make autonomous vehicle technology safer and more reliable.

Fraud Detection

Banks and financial institutions also use AI systems for fraud detection. AI systems can analyze millions of transactions and identify suspicious activities.

But if a transaction is blocked as fraudulent, it’s crucial to understand why.

This is where Explainable AI (XAI) comes in. It can explain what pattern or unusual activity led the AI to consider the transaction suspicious. This can help banks improve fraud detection systems and reduce false alerts.
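The sketch below illustrates the idea with a deliberately simple stand-in for a fraud model: it compares a new transaction with the customer’s own history and reports which features are far outside the usual range. The feature names, the sample history, and the `z_threshold` value are assumptions made for this example. Real fraud systems are far more sophisticated.

```python
from statistics import mean, stdev

def unusual_features(history, transaction, z_threshold=3.0):
    """Return the features whose value is far from this customer's past behaviour."""
    flagged = {}
    for feature, value in transaction.items():
        past = [t[feature] for t in history]
        spread = stdev(past)
        if spread == 0:
            continue  # no variation in the history, so a z-score is undefined
        z = (value - mean(past)) / spread
        if abs(z) >= z_threshold:
            flagged[feature] = round(z, 1)
    return flagged

# Hypothetical past transactions for one customer.
history = [
    {"amount": 40, "hour": 13},
    {"amount": 55, "hour": 18},
    {"amount": 35, "hour": 12},
    {"amount": 60, "hour": 19},
    {"amount": 50, "hour": 14},
]

flagged = unusual_features(history, {"amount": 900, "hour": 15})
print("flagged because of:", list(flagged))
```

Here the transaction is flagged because of its amount, not its time of day. That is the kind of explanation an analyst can check quickly before deciding whether the alert is a false alarm.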

Conclusion

These examples clearly demonstrate that Explainable AI (XAI) isn’t just a technical concept; it’s playing a crucial role in real-world applications. When AI systems explain their decisions clearly, the technology becomes more transparent, reliable, and user-friendly.

Benefits of Explainable AI

Today, as AI technology spreads across industries, it’s crucial for people to understand the decisions of AI systems. This is why Explainable AI (XAI) is considered a crucial part of modern AI development.

Explainable AI (XAI) isn’t just about explaining AI models; it also helps make the technology more transparent, reliable, and trustworthy. Below are some key benefits of Explainable AI (XAI).

1️⃣ Increases AI Transparency

AI systems often operate on very complex algorithms, making their decision process difficult to understand.

However, Explainable AI (XAI) makes it possible to identify which factors the AI system considered most heavily in making a prediction or decision. This makes the AI’s working process more transparent.

When the decision process is clear, it makes the technology easier to understand for both developers and users.

2️⃣ User Trust Increases

Public trust is crucial for the widespread adoption of any technology. If an AI system simply produces results without a clear reasoning, users cannot fully trust the system.

Explainable AI (XAI) shows users why the system made a particular decision. When people understand the logic behind the decisions, their trust in AI technology increases.

3️⃣ Bias Detection is Possible

AI systems can sometimes make biased decisions, especially when training data is not balanced. Bias means that the AI system may behave unfairly towards certain people or situations.

With Explainable AI (XAI), developers can identify which factors the AI is over-weighting when making decisions. If a feature is leading to an unfair result, it becomes much easier to detect.

In this way, Explainable AI (XAI) helps make AI systems more fair and responsible.
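One simple way to look for an over-weighted factor is to measure how much of the model’s total scoring effort comes from each feature, then check whether a sensitive or proxy feature has a large share. The sketch below assumes a hypothetical linear hiring model. The feature names, the weights, the `SENSITIVE` set, and the 10% limit are all illustrative assumptions, and the comparison is only meaningful when features are on comparable scales.

```python
# Hypothetical linear hiring model. Weights are illustrative assumptions.
WEIGHTS = {"years_experience": 0.5, "skills_match": 1.2, "postcode_group": -0.9}
SENSITIVE = {"postcode_group"}  # assumed proxy for a protected attribute
SHARE_LIMIT = 0.10              # assumed review threshold

def contribution_share(candidates):
    """Fraction of total absolute contribution that comes from each feature."""
    totals = {name: 0.0 for name in WEIGHTS}
    for candidate in candidates:
        for name, weight in WEIGHTS.items():
            totals[name] += abs(weight * candidate[name])
    grand_total = sum(totals.values())
    return {name: total / grand_total for name, total in totals.items()}

candidates = [
    {"years_experience": 2, "skills_match": 0.5, "postcode_group": 1},
    {"years_experience": 4, "skills_match": 0.75, "postcode_group": 0},
    {"years_experience": 1, "skills_match": 0.25, "postcode_group": 1},
]

shares = contribution_share(candidates)
flagged = [name for name in sorted(SENSITIVE) if shares[name] > SHARE_LIMIT]
print("features needing review:", flagged)
```

A flagged feature is not proof of bias, only a signal that developers should look more closely at how the model uses it.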

4️⃣ AI Debugging Becomes Easier

When an AI model produces an error or an unexpected result, it can be difficult to fix because developers often can’t tell which part of the model caused the problem.

Explainable AI (XAI) helps developers understand which features or calculations are involved in the model’s decision-making. This makes it easier to identify errors and improve the AI system.

5️⃣ Helps with Regulatory Compliance

Many countries are developing new rules and regulations regarding AI technology. These rules aim to ensure that AI systems operate in a fair, safe, and transparent manner.

In sectors such as finance, healthcare, and insurance, explaining AI decisions may be necessary.

In such situations, Explainable AI (XAI) helps organizations meet regulatory requirements by clearly explaining the logic behind AI decisions.

A Brief Conclusion

Simply put, Explainable AI (XAI) makes AI technology more transparent, trustworthy, and responsible. This not only increases user trust, but also allows developers and organizations to use AI systems more effectively and safely.

Challenges of Explainable AI

Just as every technology has its advantages, it also presents challenges. Explainable AI (XAI) aims to make AI systems transparent and understandable, but implementing it isn’t always easy. Sometimes AI models are so complex that their decisions are difficult to fully explain.

Below are some of the key challenges associated with Explainable AI (XAI), explained in simple terms.

Complex Models Are Difficult to Explain

Many AI systems today rely on advanced models like deep learning and neural networks. These models analyze millions of data points to make predictions.

The problem is that these models contain many layers and calculations. Therefore, it becomes difficult to understand the logic used by the AI to reach a specific decision.

This is where Explainable AI (XAI) faces challenges, as it isn’t always possible to explain the decisions of complex models in a clear and simple manner.

Accuracy vs. Explainability

A common challenge in AI development is striking a balance between accuracy and explainability.

Some AI models are very simple, such as decision trees or linear models. They are easy to understand, making them explainable. However, on complex tasks such as image or language processing, their accuracy is often not as good as that of complex models.

On the other hand, deep learning models can produce more accurate results, but they are harder to explain.

Therefore, developers often have to decide whether they want more accuracy or more explainability. This trade-off poses a major challenge for Explainable AI (XAI).
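To see why simple models count as explainable, consider this minimal sketch of a hand-written decision tree for loan decisions. The feature names and thresholds are hypothetical, chosen only for illustration. The point is that the model can return the exact path of rules it followed, so the explanation comes for free.

```python
def decide(applicant):
    """Tiny hand-written decision tree. Thresholds are illustrative assumptions."""
    path = []
    if applicant["credit_score"] < 600:
        path.append("credit_score < 600")
        return "reject", path
    path.append("credit_score >= 600")
    if applicant["debt_to_income"] > 0.4:
        path.append("debt_to_income > 0.4")
        return "reject", path
    path.append("debt_to_income <= 0.4")
    return "approve", path

decision, path = decide({"credit_score": 720, "debt_to_income": 0.55})
print(decision, "because", " and ".join(path))
```

A deep neural network has no such readable path, which is why it needs separate explanation techniques.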

Performance Issues

When an AI system has to explain its decisions, it has to perform additional calculations and analysis. This can impact the system’s processing speed.

For example, if an AI model has to generate a detailed explanation for each prediction, this process can be quite slow.

For this reason, implementing Explainable AI (XAI) can be technically challenging in some situations, especially when the system has to make real-time decisions.
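The extra cost can be made visible by counting how many times the model has to run. The sketch below uses one simple explanation approach, sometimes called occlusion: replace each feature with a baseline value and measure how the output changes. The toy model, the feature names `a`, `b`, and `c`, and the all-zero baseline are assumptions for illustration.

```python
class CountingModel:
    """Wraps a model function and counts how many times it is called."""

    def __init__(self, fn):
        self.fn = fn
        self.calls = 0

    def __call__(self, x):
        self.calls += 1
        return self.fn(x)

def occlusion_explanation(model, x, baseline):
    """Change in output when each feature is replaced by its baseline value."""
    original = model(x)
    return {
        name: original - model({**x, name: baseline[name]})
        for name in x
    }

# Hypothetical toy model with three input features.
model = CountingModel(lambda x: 0.5 * x["a"] + 0.3 * x["b"] + 0.2 * x["c"])
x = {"a": 1.0, "b": 2.0, "c": 3.0}
baseline = {"a": 0.0, "b": 0.0, "c": 0.0}

model(x)
calls_for_prediction = model.calls

model.calls = 0
explanation = occlusion_explanation(model, x, baseline)
calls_for_explanation = model.calls

print("model runs for a prediction:", calls_for_prediction)
print("model runs for an explanation:", calls_for_explanation)
```

With this approach, explaining one prediction takes one model run per feature plus one for the original input. For a model with hundreds of features, or an expensive neural network, that overhead adds up quickly in real-time systems.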

A Brief Conclusion

Although Explainable AI (XAI) helps make AI technology more transparent and trustworthy, it is not easy to fully implement. Challenges such as complex models, the balance between accuracy and explainability, and performance issues remain.

Nevertheless, researchers and developers are constantly working on new methods to make Explainable AI (XAI) more effective and practical.

Explainable AI vs. Traditional AI

Traditional AI models have long been used in the world of Artificial Intelligence. These models analyze data and make predictions or decisions, but it’s often unclear what the AI based its decisions on. The concept of Explainable AI (XAI) emerged to address this problem.

The primary objective of Explainable AI (XAI) is to make AI systems more transparent and understandable, so that humans can understand how the AI reached a particular result. When AI decisions are understandable, it becomes easier to trust the technology.

Below is a simple comparison that illustrates the difference between Traditional AI and Explainable AI (XAI).

| Feature | Traditional AI | Explainable AI (XAI) |
|---|---|---|
| Transparency | Low: the AI decision process is unclear | High: the AI can explain the reasoning behind its decisions |
| Trust | Limited: users do not fully trust the AI | High: clear decisions increase trust |
| Decision Clarity | The AI provides a result but does not explain the logic | The AI explains the reasoning behind the decision along with the result |
| Bias Detection | Bias is difficult to detect | Bias becomes easier to identify |

To put it simply, traditional AI often acts like a black box. We see the input and output, but it is difficult to understand how the decision was made in between.

On the other hand, Explainable AI (XAI) makes AI systems more transparent and human-friendly. It not only provides predictions, but also explains the factors that informed the prediction.

For this reason, Explainable AI (XAI) is being rapidly adopted in many industries, such as healthcare, banking, and finance. When AI decisions are clear and explainable, it becomes easier for both businesses and users to trust the technology.

Future of Explainable AI

Artificial Intelligence is evolving rapidly. Today, AI is not limited to technology companies, but is being used in many fields such as healthcare, banking, education, and transportation. As the use of AI increases, it is becoming increasingly important for AI systems to be able to explain their decisions in a clear and understandable manner.

For this reason, Explainable AI (XAI) is considered the future of AI. In the future, AI systems will be expected to deliver not only accurate results but also clear explanations. Below are some important trends that indicate that the role of Explainable AI (XAI) will become even more important in the future.

1️⃣ AI Regulations Will Increase

Governments and organizations around the world are now working to develop new rules and policies regarding AI technology. These regulations aim to ensure that AI systems operate in a safe, fair, and transparent manner for people.

There are many sectors where AI decisions can impact people’s lives, such as finance, healthcare, and insurance. Therefore, in the future, it may be necessary for AI systems to provide clear explanations for their decisions.

This is where Explainable AI (XAI) becomes crucial, as it helps explain AI decisions and helps organizations comply with regulations.

2️⃣ Explainable AI will grow in importance in Healthcare

The use of AI is rapidly increasing in the healthcare sector, such as in disease detection, medical imaging analysis, and diagnosis. However, medical decisions are very sensitive, so it is important for doctors to understand the basis on which AI diagnoses a disease.

In the future, the role of Explainable AI (XAI) may become even more important in hospitals and medical research. This will help doctors better understand AI recommendations and make safer decisions for patients.

3️⃣ Responsible AI Movement will grow

Today, a new concept is gaining popularity in the technology world called Responsible AI. This means that AI systems should operate in an ethical, fair, and transparent manner.

Explainable AI (XAI) is an important part of Responsible AI, because when AI explains its decisions, developers can check whether the system is acting in an unbiased and responsible manner.

In the future, companies and organizations will focus more on Responsible AI, which will increase the demand for Explainable AI (XAI).

4️⃣ AI Transparency Will Become a Future Requirement

As AI systems become more integrated into people’s daily lives, transparency will become a necessary requirement. People will want to know the reasons behind the decisions AI systems are making for them.

For this reason, in the future, Explainable AI (XAI) will not be just an optional feature but may become a standard requirement for AI development.

A Brief Conclusion

Simply put, the focus of AI technology in the future will not only be on creating powerful algorithms, but also on creating transparent and trustworthy AI systems.

Explainable AI (XAI) will play an important role in this direction, as it makes AI technology more understandable, safe and reliable for humans.

Conclusion

Artificial Intelligence has become one of the most powerful technologies in today’s world. It’s helping make decisions in healthcare, banking, education, and many other industries. But having powerful AI isn’t enough—transparency and trust are equally important.

This is where Explainable AI (XAI) comes into play. It not only makes AI systems smart, but also makes their decisions understandable. When people understand why an AI made a decision, their trust in the technology increases.

In the future, AI systems will be expected not only to make accurate predictions, but also to provide clear explanations. Therefore, many experts believe that Explainable AI (XAI) could become a core part of future AI systems.

Simply put, the future of AI won’t be just about intelligent systems, but also about transparent and responsible AI—and Explainable AI (XAI) is an important step in this direction.

If you want to learn more about AI technologies like Explainable AI (XAI), follow our blog for regular AI insights and easy-to-understand AI guides.